This review gives an overview of interesting things I stumbled over that are related to machine learning. Most of them were posted in KIT's machine learning group (on Facebook).

New Developments

- Random forests for courier detection: Has a rampaging AI algorithm called Skynet really killed thousands in Pakistan?

Live Demos and Websites

Quickdraw

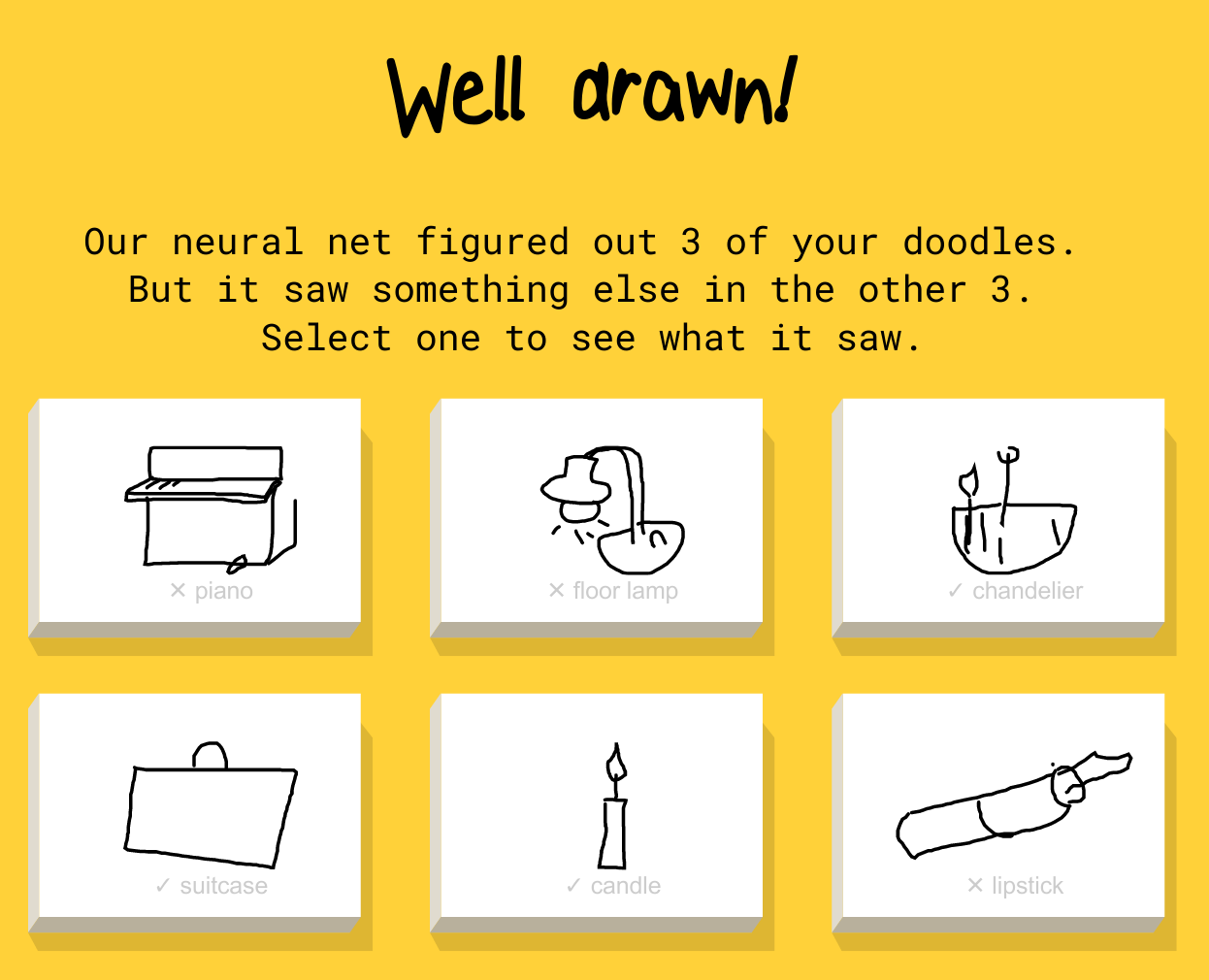

Quickdraw is a program that tries to guess what you draw. However, it is difficult to check whether it really applies machine learning, because it tells you what to draw and then tries to recognize it.

It looks very much like an attempt to get lots of training data. However, this plan might not work that well: Interesting Quickdraw Fails

You might find more stuff like Quickdraw on aiexperiments.withgoogle.com.

Loss Functions

lossfunctions.tumblr.com is a blog created by Andrej Karpathy where he collects - well, let's call them "interesting" - loss functions.

Publications

Deep Neural Networks are Easily Fooled

The input of CNNs for image classification can be manipulated in two ways:

- An image in which a human does not recognize anything (e.g. white noise) gets a high score for some object class.

- An image in which a human is certain to recognize one class (e.g. "cat") is manipulated so that the CNN classifies it as something different (e.g. "factory") with high certainty.

See also:

- Anh Nguyen, Jason Yosinski, Jeff Clune: Deep Neural Networks are Easily Fooled: High Confidence Predictions for Unrecognizable Images on arxiv.

- Evolving AI Lab: Deep Neural Networks are Easily Fooled on YouTube in 5:33 min.

- Google: Inceptionism: Going Deeper into Neural Networks. 17.06.2016.

Breaking Linear Classifiers on ImageNet

Andrej Karpathy has once again written a nice article. It describes how easily linear classifiers can be fooled.

Hinton commented something similar on Reddit.
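To make the fragility of linear classifiers a bit more concrete, here is a minimal sketch in plain Python. It is not code from Karpathy's article; the weights, inputs, dimension and `eps` are made-up values for illustration. The idea it shows: if every input value is nudged by a tiny amount `eps` in the direction of the sign of its weight, the score rises by `eps` times the sum of the absolute weights, and that sum grows with the number of inputs.

```python
import random


def score(weights, inputs, bias):
    """Linear classifier score: positive means class A, negative means class B."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias


def sign(value):
    """Return -1, 0 or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, inputs, eps):
    """Shift every input by at most eps in the direction that raises the score."""
    return [x + eps * sign(w) for w, x in zip(weights, inputs)]


random.seed(0)
dim = 1000  # hypothetical input size, e.g. a tiny flattened image
weights = [random.gauss(0.0, 1.0) for _ in range(dim)]
inputs = [random.gauss(0.0, 1.0) for _ in range(dim)]

# Pick the bias so that the clean input scores about -1 (class B).
bias = -1.0 - score(weights, inputs, 0.0)

eps = 0.01  # assumed perturbation size per input value
adversarial = perturb(weights, inputs, eps)

clean_score = score(weights, inputs, bias)
adversarial_score = score(weights, adversarial, bias)
largest_change = max(abs(a - x) for a, x in zip(adversarial, inputs))

print(clean_score, adversarial_score, largest_change)
```

No single input value moves by more than `eps`, yet the sign of the score flips. With more input dimensions, an even smaller `eps` would be enough.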

Where am I?

One gives the neural network a photo and it tells you where it was taken.

LIME

"Why Should I Trust You?": Explaining the Predictions of Any Classifier deals with the problem of analyzing the decision-making process of black-box models.

Lip Reading

See the paper LipNet: Sentence-Level Lipreading for details.

More

- Learning to Protect Communications with Adversarial Neural Cryptography

- 2016 Report: One Hundred Year Study on Artificial Intelligence (AI100)

Software

Seaborn

Seaborn is a Python package for the visualization of data and statistics.

See stanford.edu/~mwaskom/software/seaborn.

RecNet

Jörg made recnet publicly available. It is a framework based on Theano to simplify the creation of recurrent networks.

Image Segmentation Using DIGITS 5

I haven't tried it yet, but the images in the article Image Segmentation Using DIGITS 5 look awesome. I would be happy to hear what you think about it.

Keras.js

Run Keras models (trained using the TensorFlow backend) in your browser, with GPU support. Models are created directly from the Keras JSON-format configuration file, using weights serialized directly from the corresponding HDF5 file.

See github.com/transcranial/keras-js for more.

Interesting Questions

- Does momentum help in a perfect “Long Valley” situation?

- Are non-zero paddings used?

- Why do CNNs with ReLU learn that well?

- Is there a metric for the similarity of two image filters?
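The momentum question can at least be probed with a toy experiment. The following sketch assumes that a "long valley" can be modelled as a quadratic bowl with very different curvatures along its two axes, which may not be exactly what the question has in mind. The curvatures, learning rate, momentum coefficient and step count are arbitrary choices. It runs plain gradient descent and gradient descent with classical momentum from the same start point and compares the final loss.

```python
def gradient(point, curvatures):
    """Gradient of f(p) = 0.5 * sum(c_i * p_i^2)."""
    return [c * x for c, x in zip(curvatures, point)]


def loss(point, curvatures):
    """Value of the quadratic bowl at the given point."""
    return 0.5 * sum(c * x * x for c, x in zip(curvatures, point))


def descend(curvatures, start, lr, momentum, steps):
    """Gradient descent with classical momentum; momentum=0.0 gives plain descent."""
    point = list(start)
    velocity = [0.0] * len(point)
    for _ in range(steps):
        grad = gradient(point, curvatures)
        velocity = [momentum * v - lr * g for v, g in zip(velocity, grad)]
        point = [x + v for x, v in zip(point, velocity)]
    return loss(point, curvatures)


# Assumed toy valley: flat along the first axis, steep along the second.
curvatures = [1.0, 100.0]
start = [1.0, 1.0]

plain_loss = descend(curvatures, start, lr=0.01, momentum=0.0, steps=100)
momentum_loss = descend(curvatures, start, lr=0.01, momentum=0.9, steps=100)

print(plain_loss, momentum_loss)
```

With these particular settings the momentum run ends at a lower loss, because plain descent crawls along the flat axis. This is only one configuration and does not settle the question in general.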

Miscellaneous

- Why robots, not trade, are behind so many factory job losses

- halite.io: A website for ML challenges.

- Google DeepMind's next gaming challenge: can AI beat StarCraft II? (and the post on deepnet)

- All it takes to steal your face is a special pair of glasses - and the paper Accessorize to a Crime: Real and Stealthy Attacks on State-of-the-Art Face Recognition

- When A Machine Learning Algorithm Studied Fine Art Paintings, It Saw Things Art Historians Had Never Noticed

- Image Synthesis from Yahoo's open_nsfw

- Cops have a database of 117M faces. You're probably in it

- Ants Challenge - Part I: identify and track individual ants over time; recognize when ants engage in food transfer

- Five years of observations from tandem satellites produce 3D world map of unprecedented accuracy

- Stealing Machine Learning Models via Prediction APIs

- DeepMind’s AI has learned to navigate the Tube using memory

Meetings

- London, 7 April 2016: Deep Learning in Healthcare Summit (Link)